
SNU Researchers Develop AI Technology That Compresses LLM Chatbot ‘Conversation Memory’ by 3–4 Times

- Uploaded by: Office of External Relations

- Upload Date: 2025.10.22



- Selected as an Oral Presentation (top 0.35%) at NeurIPS 2025, one of the world’s premier AI conferences

- New method “KVzip” reduces chatbot response time and memory cost while preserving accuracy

- Three NeurIPS 2025 papers and one TMLR publication highlight the team’s research excellence

▲ (From left) Professor Hyun Oh Song, and researchers Jang-Hyun Kim, Deokjae Lee, Seungyong Moon, and Jinuk Kim from the Department of Computer Science and Engineering, Seoul National University.

Seoul National University College of Engineering announced that a research team led by Professor Hyun Oh Song from the Department of Computer Science and Engineering has developed a new AI technology called “KVzip” that intelligently compresses the “conversation memory” of large language model (LLM)-based chatbots used in long-context tasks such as extended dialogue and document summarization.

The term “conversation memory” refers to the temporary storage of sentences, questions, and responses that a chatbot maintains during an interaction and uses to generate contextually coherent replies. With KVzip, a chatbot compresses this memory by discarding redundant information that is not needed to reconstruct the context. The technique preserves accuracy while reducing memory size and speeding up response generation, a major step toward efficient, scalable AI dialogue systems.

The team’s paper, titled “KVzip: Query-Agnostic KV Cache Compression with Context Reconstruction,” was selected as an Oral Presentation (top 0.35%) among 21,575 submissions to NeurIPS 2025, one of the world’s most prestigious conferences in artificial intelligence (H5-index 371*).

* H5-index: A Google Scholar metric representing a publication’s citation-based academic influence.

Modern LLM chatbots perform tasks such as dialogue, coding, and question answering using enormous contexts that can span hundreds or even thousands of pages. As conversations grow longer, however, the accumulated conversation memory increases computational cost and slows down response time.

To address this issue, researchers have developed memory compression methods that enable chatbots to retain only essential contextual information, rather than storing every detail of previous exchanges. However, most existing compression techniques are query-dependent, meaning they optimize memory only for the current question. When a new or follow-up question is asked, the chatbot’s performance typically deteriorates significantly.

To overcome this limitation, Professor Song’s team proposed KVzip, a novel method that effectively reduces the size of the conversation memory in long-context dialogues while maintaining the same level of accuracy. KVzip performs compression by retaining only the information necessary for context reconstruction, allowing the chatbot to handle multiple future queries without the need to recompress its memory each time.
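The idea of query-agnostic compression can be illustrated with a toy sketch. It is not the team's implementation: it assumes that each cached entry is scored by the largest attention weight it receives while the context is being reconstructed, and it uses random vectors as a stand-in for a real model's keys, values, and reconstruction queries. The function names `importance_scores` and `compress_cache` are hypothetical.

```python
import math
import random


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def importance_scores(queries, keys):
    """Largest attention weight each cached key receives over all queries."""
    dim = len(keys[0])
    scores = [0.0] * len(keys)
    for q in queries:
        logits = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(dim)
                  for k in keys]
        for i, weight in enumerate(softmax(logits)):
            scores[i] = max(scores[i], weight)
    return scores


def compress_cache(keys, values, scores, keep_ratio):
    """Keep the highest-scoring entries, preserving their original order."""
    n_keep = max(1, round(len(keys) * keep_ratio))
    top = sorted(range(len(keys)), key=lambda i: scores[i], reverse=True)
    kept = sorted(top[:n_keep])
    return [keys[i] for i in kept], [values[i] for i in kept], kept


random.seed(0)
dim, n_tokens = 8, 12
keys = [[random.gauss(0, 1) for _ in range(dim)] for _ in range(n_tokens)]
values = [[random.gauss(0, 1) for _ in range(dim)] for _ in range(n_tokens)]
# Assumption: stand-in for the queries issued while reconstructing the context.
recon_queries = [[random.gauss(0, 1) for _ in range(dim)]
                 for _ in range(n_tokens)]

scores = importance_scores(recon_queries, keys)
small_keys, small_values, kept = compress_cache(keys, values, scores,
                                                keep_ratio=0.3)
print(len(keys), "->", len(small_keys), "entries; kept positions:", kept)
```

Because the scores depend only on the context and not on any particular user question, the same compressed cache can in principle be reused for every later query, which is the property the article describes.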

In a wide range of tasks—including question answering, retrieval, reasoning, and code understanding—KVzip achieved 3–4× memory reduction and approximately 2× faster response times, all without any loss in accuracy. The technique also demonstrated scalability to extremely long contexts of up to 170,000 tokens* using major open-source LLMs such as Llama 3.1, Qwen 2.5, and Gemma 3.

* Token: The smallest text unit processed by an LLM, such as a word fragment or subword.
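To give a sense of scale, the following back-of-the-envelope calculation estimates the size of an uncompressed conversation memory (KV cache) at 170,000 tokens. The model shape used here (32 layers, 8 key-value heads, head dimension 128, 16-bit values) is an assumption corresponding to a Llama 3.1 8B-style configuration; it does not come from the announcement, and real deployments may differ.

```python
def kv_cache_bytes(n_tokens, n_layers, n_kv_heads, head_dim, bytes_per_value=2):
    """Approximate KV cache size in bytes for a single sequence."""
    # Factor 2: one key vector and one value vector per token, layer, and head.
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_value * n_tokens


GIB = 1024 ** 3

# Assumed model shape (Llama 3.1 8B-style); not stated in the article.
full = kv_cache_bytes(170_000, n_layers=32, n_kv_heads=8, head_dim=128)
print(f"uncompressed cache: {full / GIB:.1f} GiB")
for ratio in (3, 4):
    print(f"{ratio}x compression:  {full / ratio / GIB:.1f} GiB")
```

Under these assumptions the full cache is roughly 20.8 GiB, and a 3–4× reduction brings it down to about 5.2–6.9 GiB per conversation.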

Moreover, KVzip maintained stable response quality across multiple rounds of diverse follow-up questions, overcoming the generalization limits of prior memory compression methods. Notably, the technology has been integrated into NVIDIA’s open-source KV cache compression library, KVPress, making it readily accessible for practical deployment.

In the near future, KVzip is expected to be widely adopted in enterprise-scale LLM systems, including retrieval-augmented generation (RAG) pipelines and personalized chatbot services. By reducing memory usage by 3–4× and shortening response latency by about 2×, the method allows servers to handle more concurrent users and longer conversations while significantly lowering operating costs.

Additionally, because the same compressed memory can be reused across different query types, there is no need for recompression at each question, and no risk of accuracy degradation in subsequent exchanges. These properties make KVzip particularly advantageous for mobile and edge environments, where computational and memory resources are limited, enabling stable long-context personalization capabilities even on-device.

Professor Hyun Oh Song, who advised the research, stated, “KVzip is significant in that it enables reusable compressed memory that retains only the most essential information, even in LLM agents requiring long contextual understanding.” Dr. Jang-Hyun Kim, the project's main contributor, stated, “KVzip can be seamlessly applied to real-world LLM applications and on-device systems to ensure consistent quality and improved speed for long-context interactions.”

The first author, Dr. Jang-Hyun Kim, will join the AI/ML Foundation Models team at Apple as a machine learning researcher.

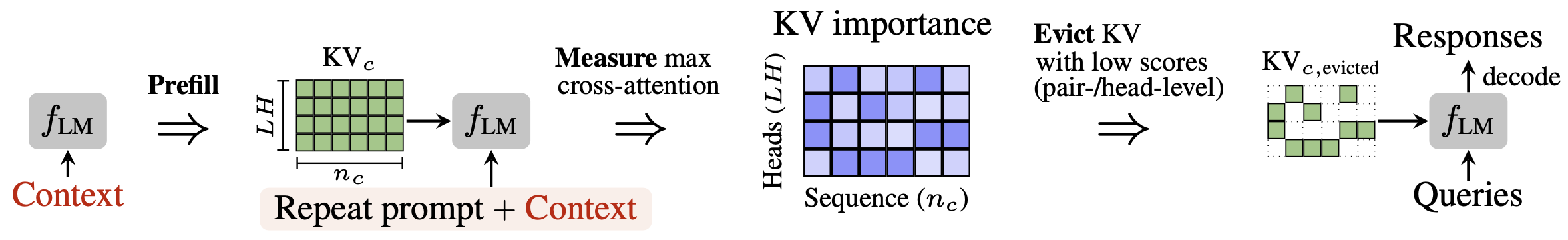

▲ Conceptual illustration of the KVzip approach:

In long conversations, chatbots generate large “conversation memories” (KV). KVzip selectively retains only the information useful for any future question, autonomously verifying and compressing its memory for efficient reuse.

The Machine Learning Laboratory led by Professor Song also had two additional papers accepted as poster presentations at NeurIPS 2025 and one paper published in the journal Transactions on Machine Learning Research (TMLR).

In the NeurIPS 2025 paper titled “Q-Palette: Fractional-Bit Quantizers Toward Optimal Bit Allocation for Efficient LLM Deployment,” the team presented a theoretical analysis of optimal bitwidth allocation across layers in the quantization of large language models and introduced “Q-Palette,” a set of fractional-bit quantizers that realize this optimal allocation.

The method achieved a 36% improvement in inference speed compared to existing quantization approaches at equivalent performance levels.

Another NeurIPS 2025 paper, “Learning to Better Search with Language Models via Guided Reinforced Self-Training,” proposed Guided-ReST, a new reinforcement learning algorithm that enables large language models to autonomously learn improved reasoning and search strategies. On the challenging Countdown reasoning benchmark, Guided-ReST improved accuracy by 10% and reasoning efficiency by 50%.

In addition, the team’s TMLR paper, “Large-Scale Targeted Cause Discovery via Learning from Simulated Data,” introduced a supervised causal inference method for efficiently identifying causal variables of target factors. The proposed method scales linearly with the number of variables, making it suitable for large-scale systems, and achieved state-of-the-art causal discovery performance in gene regulatory network benchmarks.

[Reference Materials]

1. NeurIPS Oral Paper

- Title: KVzip: Query-Agnostic KV Cache Compression with Context Reconstruction

- Authors: Jang-Hyun Kim, Jinuk Kim, Sangwoo Kwon, Jae W. Lee, Sangdoo Yun, Hyun Oh Song

- Link: https://arxiv.org/pdf/2505.23416

2. NeurIPS Poster Paper

- Title: Q-Palette: Fractional-Bit Quantizers Toward Optimal Bit Allocation for Efficient LLM Deployment

- Authors: Deokjae Lee, Hyun Oh Song

- Link: https://arxiv.org/pdf/2509.20214

3. NeurIPS Poster Paper

- Title: Learning to Better Search with Language Models via Guided Reinforced Self-Training

- Authors: Seungyong Moon, Bumsoo Park, Hyun Oh Song

- Link: https://arxiv.org/pdf/2410.02992

4. TMLR Paper

- Title: Large-Scale Targeted Cause Discovery via Learning from Simulated Data

- Authors: Jang-Hyun Kim, Claudia Skok Gibbs, Sangdoo Yun, Hyun Oh Song, Kyunghyun Cho

- Link: https://arxiv.org/pdf/2408.16218

[Contact Information]

Professor Hyun Oh Song, Department of Computer Science and Engineering, Seoul National University / +82-2-880-7272 / hyunoh@snu.ac.kr