SNU Researchers Develop ‘Dynin-Omni,’ a Next-Generation Unified AI Foundation Model That Simultaneously Understands and Generates Text, Images, Audio, and Video

- Uploaded by: Office of External Relations (대외협력실)

- Upload Date: Apr 03, 2026

- Integrates “reading” and “writing” into a single model, overcoming limitations of existing AI systems such as ChatGPT

- Outperforms existing models across 19 global AI benchmarks, demonstrating world-class performance

- Expected to serve as core intelligence for robotics, AI assistants, and smart devices across industries

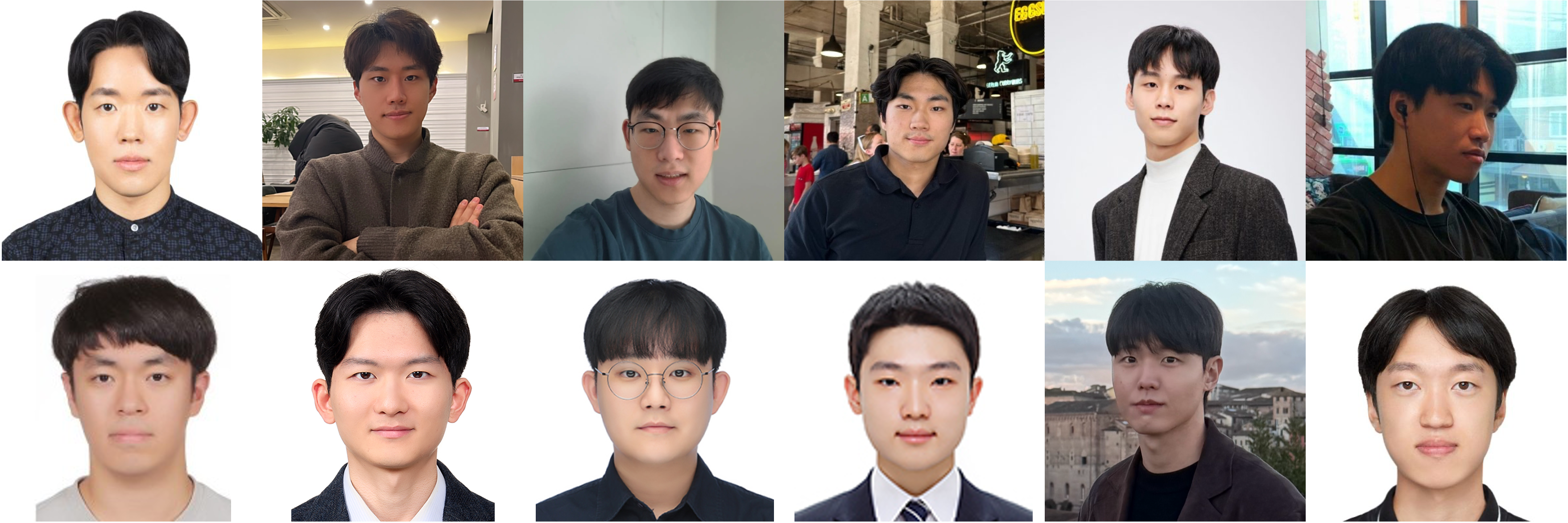

▲ (Clockwise from top left) Jae Young Do, Professor, Department of Electrical and Computer Engineering and Interdisciplinary Program in Artificial Intelligence, Seoul National University; Jaeik Kim, Woojin Kim, Jihwan Hong, Yejoon Lee, Sieun Hyeon, Mintaek Lim, Yun Seok Han, Dogeun Kim, Hoeun Lee, Hyeonggeun Kim, Jin Hyeok Kim

Seoul National University College of Engineering announced that a research team led by Professor Jae Young Do from the Department of Electrical and Computer Engineering (AIDAS Lab) has developed “Dynin-Omni,” a next-generation artificial intelligence (AI) foundation model capable of simultaneously understanding and generating text, images, video, and audio within a single unified framework.

▲ Figure 1. Overview of the next-generation unified AI foundation model “Dynin-Omni.”

The research team designed an architecture that lets a single AI model process all sensory modalities at once, overcoming a limitation of systems like ChatGPT, which generate output sequentially. This marks the world’s first realization of a truly “all-in-one” omnimodal* AI that can simultaneously understand and generate all forms of data, from text to video, within a single model. (Figure 1)

* Omnimodal refers to the capability of a single AI system to comprehensively understand and process all types of data in an integrated manner.

This technology is expected to serve as core intelligence across various industries—such as robotics, AI assistants, and smart devices—where AI must simultaneously process multiple types of information and respond in real time.

Recent advances in AI have expanded capabilities beyond text to include images, audio, and video. However, achieving natural interaction with humans requires more than simply “reading” information; it demands complex, integrated interaction capabilities. For example, listening to speech and immediately generating a drawing, or analyzing a video and explaining it verbally, requires “integrated intelligence” that simultaneously utilizes multiple sensory modalities, similar to human cognition.

Existing AI systems struggle to integrate multimodal information coherently because they either separate understanding from generation or rely on complex pipelines that chain multiple models together. In particular, implementing a “fully integrated” architecture, in which a single model both understands all modalities and directly generates outputs, has remained a significant technical challenge.

▲ Figure 2. Omnimodal training and inference architecture of Dynin-Omni:

(Left) Fully integrated omnimodal training, converting all modality inputs into a shared language space.

(Right) An inference pipeline reflecting modality-specific characteristics, enabling faster and more flexible generation.

To overcome these limitations, the research team successfully developed Dynin-Omni, a next-generation unified AI foundation model designed to process all types of information within a single integrated architecture. The model simultaneously handles text, images, video, and audio, performing both understanding and generation within one unified system. (Figure 2)

Dynin-Omni has three key distinguishing features.

First, it processes all types of information in a unified manner. While conventional AI systems interpret images or audio through text-based representations, Dynin-Omni directly understands all modalities simultaneously under a common framework. This allows for more accurate and organic integration of different data types without intermediate conversion processes.
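One way to picture a common framework for several modalities is a single token vocabulary in which each modality occupies its own ID range. The sketch below is only an illustration of that idea: the codebook sizes, the offset scheme, and the function names `to_shared` and `from_shared` are all hypothetical and are not taken from Dynin-Omni.

```python
# Hypothetical per-modality codebook sizes (illustrative values only).
CODEBOOK_SIZES = {"text": 1000, "image": 512, "audio": 256, "video": 512}


def build_offsets(sizes):
    """Assign each modality a contiguous ID range in one shared vocabulary."""
    offsets, start = {}, 0
    for name, size in sizes.items():
        offsets[name] = start
        start += size
    return offsets, start  # start is now the total shared vocabulary size


OFFSETS, VOCAB_SIZE = build_offsets(CODEBOOK_SIZES)


def to_shared(modality, local_ids):
    """Map modality-local token IDs into the shared vocabulary."""
    size = CODEBOOK_SIZES[modality]
    if any(not 0 <= i < size for i in local_ids):
        raise ValueError(f"token ID out of range for {modality}")
    return [OFFSETS[modality] + i for i in local_ids]


def from_shared(shared_id):
    """Recover (modality, local ID) from a shared-vocabulary ID."""
    for name, start in OFFSETS.items():
        if start <= shared_id < start + CODEBOOK_SIZES[name]:
            return name, shared_id - start
    raise ValueError("ID outside the shared vocabulary")


# A mixed sequence: two text tokens followed by two image tokens.
sequence = to_shared("text", [7, 42]) + to_shared("image", [0, 5])
```

Because every token in `sequence` lives in the same ID space, one model can in principle read and emit any modality without a separate conversion step, which is the property the paragraph above describes.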

Second, it employs a diffusion-based approach* that generates the overall output at once and then refines it, significantly improving speed. Unlike traditional models such as ChatGPT, which generate outputs sequentially, word by word, Dynin-Omni first establishes the global structure of the output and then refines all parts of it in parallel. This enables faster and more efficient processing of large-scale data such as video and audio.

* Diffusion-based approach: A method that generates the entire output at once and iteratively refines it through repeated computations to enhance quality.
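The contrast between word-by-word generation and whole-output refinement can be sketched with a toy decoder. This is a minimal illustration, not Dynin-Omni's sampler: `toy_model` is a hypothetical stand-in that already knows the answer, and the confidence-based unmasking schedule is one common form of discrete diffusion decoding, assumed here for illustration only.

```python
import math

MASK = "<mask>"
TARGET = ["a", "cat", "sat", "on", "the", "mat", "today", "."]


def toy_model(tokens):
    """Hypothetical stand-in for a trained network.

    Returns a (token, confidence) pair for every position in one call.
    """
    preds = []
    for i in range(len(tokens)):
        # Toy rule: confidence rises when adjacent positions are already filled.
        neighbours = [tokens[j] for j in (i - 1, i + 1) if 0 <= j < len(tokens)]
        known = sum(t != MASK for t in neighbours)
        preds.append((TARGET[i], 0.5 + 0.2 * known - 0.01 * i))
    return preds


def decode_sequential(length):
    """Autoregressive style: one model call per output token."""
    tokens, calls = [], 0
    for i in range(length):
        preds = toy_model(tokens + [MASK] * (length - i))
        calls += 1
        tokens.append(preds[i][0])
    return tokens, calls


def decode_by_refinement(length, steps):
    """Diffusion style: start fully masked, fill many positions per call."""
    tokens, calls = [MASK] * length, 0
    for step in range(steps):
        masked = [i for i, t in enumerate(tokens) if t == MASK]
        if not masked:
            break
        preds = toy_model(tokens)
        calls += 1
        # Commit the most confident predictions; the rest stay masked for now.
        quota = math.ceil(len(masked) / (steps - step))
        for i in sorted(masked, key=lambda i: preds[i][1], reverse=True)[:quota]:
            tokens[i] = preds[i][0]
    return tokens, calls
```

In this toy, `decode_sequential(8)` needs eight model calls while `decode_by_refinement(8, steps=3)` needs three for the same eight tokens. The number of calls depends on the step budget rather than on the output length, which is the intuition behind the speed advantage on long outputs such as video and audio.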

Third, it integrates understanding and generation into a single model. Unlike conventional approaches that combine multiple AI models, Dynin-Omni performs perception, reasoning, and generation seamlessly within a single intelligence—similar to how humans see, hear, and speak without interruption.

▲ Figure 3. Performance evaluation of Dynin-Omni in omnimodal understanding and generation

Dynin-Omni demonstrated outstanding performance in empirical evaluations. Across 19 global AI benchmarks, it outperformed existing unified models in tasks such as reasoning, video understanding, image generation and editing, and audio processing. Notably, it achieved performance comparable to specialized expert models tailored to specific domains. Furthermore, Dynin-Omni generated outputs up to four to five times faster than existing unified AI models, demonstrating a significant efficiency advantage. (Figure 3)

This research is significant in that it integrates all sensory capabilities—seeing, hearing, and speaking—into a single “brain,” akin to human intelligence. With this technology, AI assistants could evolve to simultaneously understand voice, images, and video, and respond instantly. Additionally, eliminating the need to connect multiple AI models simplifies systems, making services faster and more lightweight.

Most importantly, because the model is designed to process diverse sensory information simultaneously, it can be readily applied across various environments—such as factories, healthcare, and residential settings—without requiring separate model reconfiguration. In particular, Dynin-Omni’s integrated architecture is expected to play a critical role in real-world scenarios where robots must autonomously perceive and act. As a result, this model is anticipated to become a key enabling technology for the era of “Physical AI,” where artificial intelligence extends beyond digital interfaces into real-world applications through robots and smart devices, solving practical problems in everyday life.

Professor Jae Young Do, who led the research, stated, “This study is significant in that it integrates AI’s ability to understand information with its ability to generate outputs, opening the possibility of unified intelligence capable of processing diverse modalities—such as text and images—simultaneously, much like humans.” He added, “Going forward, we plan to expand this research beyond processing data on screens toward technologies that interact with humans in real time and operate directly in the physical world, such as intelligent robots and smart devices that provide tangible benefits in everyday life.”

Globally, leadership in AI research is rapidly shifting from large corporations to innovative, university-driven research. Examples include Tsinghua University’s “GLM” series and the Shanghai AI Laboratory’s “InternLM,” where universities and research institutes design and train models from scratch to drive national AI competitiveness.

The core researchers behind this work will continue their studies and research at SNU’s Artificial Intelligence and Big Data Systems (AIDAS) Lab, further advancing Dynin-Omni into a more sophisticated and powerful model. In particular, they aim to establish this model as the starting point of a Korean-led unified omnimodal AI series, improving processing speed and accuracy while extending it into a Physical AI model (Dynin-Robotics) capable of serving as the “brain” of robots operating in real-world environments. Furthermore, the team plans to collaborate closely with the domestic research ecosystem to strengthen Korea’s global leadership in omnimodal AI.

This research was supported by the National Research Foundation of Korea through the Basic Science Research Program in Science and Engineering (Outstanding Early-Career Research Program), as well as the High-Performance Computing Support Program funded by the Ministry of Science and ICT and the National IT Industry Promotion Agency.

[Reference Materials]

- Project page: https://dynin.ai/omni

[Contact Information]

Professor Jae Young Do, AIDAS Lab, Department of Electrical and Computer Engineering and Interdisciplinary Program in Artificial Intelligence, Seoul National University / jaeyoung.do@snu.ac.kr